How much process do you need to run a software development team? Or a technical organisation in general? Some? Lots? None? Maybe you’d opt for the likes of ITIL and Prince2? Or something lighter weight and more pragmatic like Scrum? What about testing of software? How much should you do?

I do not claim to know the answers to these questions, and obviously the answers depend a lot on your team, your organisation and the product you are developing. However, I have noticed widely differing attitudes towards testing amongst engineers. Here are the two extremes:

Test Everything – Process wins

How can you be sure that you are releasing working code unless you test it? You’ll need to do user acceptance testing at a minimum, preferably system/integration testing too. If your application requires it, add performance testing, security testing and penetration testing. Ideally all developers should be using test-driven development and writing suites of unit tests that cover 100% of their code. Ideally all of this will be automated, with at least one round of manual testing too, to ensure the tests themselves are correct (testing the tests).
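To make the unit-testing end of this concrete, here is a minimal sketch in Python using the standard library's `unittest` module. The `apply_discount` function and its validation rules are hypothetical, invented purely for illustration; the point is only to show what "covering the edge cases" tends to look like in practice, not to prescribe a particular style.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Return price reduced by percent (hypothetical example function)."""
    if price < 0:
        raise ValueError("price must not be negative")
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTests(unittest.TestCase):
    def test_typical_discount(self):
        # The ordinary, expected use of the function.
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_and_full_discount(self):
        # Boundary values at both ends of the allowed range.
        self.assertEqual(apply_discount(80.0, 0), 80.0)
        self.assertEqual(apply_discount(80.0, 100), 0.0)

    def test_rejects_invalid_input(self):
        # Invalid input should fail loudly rather than return nonsense.
        with self.assertRaises(ValueError):
            apply_discount(-1.0, 10)
        with self.assertRaises(ValueError):
            apply_discount(10.0, 101)
```

Saved in a module, tests like these can be run with `python -m unittest`. Note that the tests here are already longer than the function they exercise.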

When code is changed you can never be sure what might be affected, so you’ll have to run all the tests every time new code is prepared for release. Code must be tested first by the developers in a dev environment, then passed to a testing or integration environment where most tests are run again. Then finally everything is released to a live-like pre-production environment where regression testing is performed. Then you can put the code live.

Test Nothing – Heroics win

How can I be sure that I’m releasing working code? Because I’ve checked it in my dev environment and it works. I have thought about the edge cases and defensively coded around them. I have had to refactor some things but I am confident in my approach and there should be no effect on the refactored code. I know my code will still be as secure and scalable as the previous release because I haven’t written anything that could introduce any issues. When I release this code I will monitor the production system carefully for unforeseen errors. Because I have access to the production environment and a quick, lightweight release procedure I can easily roll back if things go horribly wrong. Any minor bugs that are found in live I can fix later.

Everyone in my team knows the entire codebase just as well as I do so we can all be confident in each other’s ability to code and release like this. When we have new starters we spend a long time training them and gradually easing them into doing releases that increase in complexity with their level of experience.

Which test strategy is for me?

Obviously if I were developing a core accounts system for a bank, the heroic method probably wouldn’t work too well. Conversely, if I were developing a low-traffic company intranet, the process approach would be overkill.

I have seen both approaches work within the same team. The heroics approach is wonderfully efficient when it works as there is very little to get in the way of writing useful functional code and getting it released. You can achieve a staggering throughput with relatively few people. It can be scary doing releases but it’s probably worth it in order to get more stuff out the door.

The process-based approach gives you a lot of confidence in the code you are releasing: heart-in-mouth moments and failed releases are almost non-existent, bugs are reduced in scale and severity, and sleeping at night is easier! Once the effort has been put into writing tests it is fairly simple to then automate them. Running the full suite of tests every time someone commits a change to the code gives you rapid feedback on whether it has affected anything else, and seeing a green report that all tests have passed gives you a lot of confidence. When paired with manual testing you gain even more confidence that your tests are valid and appropriate.

The heroic approach totally relies on having very technically strong, multi-skilled developers. As soon as even an average developer enters the team and tries to play the same game, problems start to appear. Anyone who cannot adequately assess the impact of their code changes will encounter problems, big problems. It also doesn’t scale very well, as it relies on everyone knowing about all areas of your systems. Not only does this experience take a long time to gather, but it becomes much harder as the team grows: the more team members you have, the more code you produce, and the longer it takes to gain experience of it. As the team grows you’ll be spending more time learning existing code and less time writing new code, or more likely you’ll ignore the learning bit and just write more code. The team becomes less effective, and bugs and outages increase.

The process approach requires a great deal of effort to be put into testing: writing unit tests, integration tests, user acceptance tests, however much you want to do. Writing tests that give good coverage is a significant effort and in some cases may be comparable to the effort of actually writing the code in the first place, perhaps even more. In order to be able to rely on your automated tests to give you confidence, you have to make sure that all new code has good automated test coverage and you have to maintain all your existing tests too. If you fall behind in maintaining decent coverage, the value of your test back-catalogue is eroded and you lose confidence in your tests, effectively defeating the purpose of having them in the first place.

Clearly it isn’t a case of picking one option or the other; there are as many shades of grey between those extremes as there are development teams in the world. The main point is to think about where on the spectrum your team should sit. This will depend upon many things, such as:

- How risk averse your company/team is

- Stability requirements of your product

- Size and make up of the team

- Maturity of the team

- Strength and style of developers in the team

It is useful to bear this spectrum in mind when responding to production failures. When you release something that experiences problems and you inevitably get asked the question “what are you going to do to stop this happening again?”, the answer you might naturally turn to is “do more testing”. However, depending on where your team sits on the spectrum, it may well be better to convince stakeholders that it would be more beneficial to take this sort of thing on the chin and to do less testing, not more.

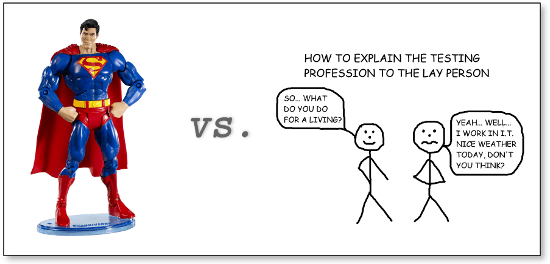

Thanks to Cartoon Tester for the testing cartoon 🙂

I really am against the “test nothing” strategy. There are many developers out there who think that their job is only to code – they don’t even have to do any testing (like any testing whatsoever). Their only testing is to ensure that they don’t see any errors and the functions are returning the “supposed results”.

Exactly. With those people the heroic approach simply will not work. For it to work you need people with not only a high level of skill but a wide breadth of knowledge and a strong sense of responsibility for the systems they work on. “Throw it over the wall” narrow-minded types are going to be bad news regardless of what testing strategy you have in place!

Well said that man. Big Finance is a sod for this kind of thing – trying to sell Agile / TDD / CI into a provider of real-time stock-market FX trading was one of my more challenging positions. And code-only developers can eat my shorts – there’s far too many of those still about too.